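- Add Ollama LLM translation and Google Translate (non-real-time), with support for multiple languages
- Add non-real-time translation to the Vosk engine
- Add and modify interfaces for the new translation features
- Update the Electron build configuration so that builds for different platforms no longer require editing the build file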

auto-caption

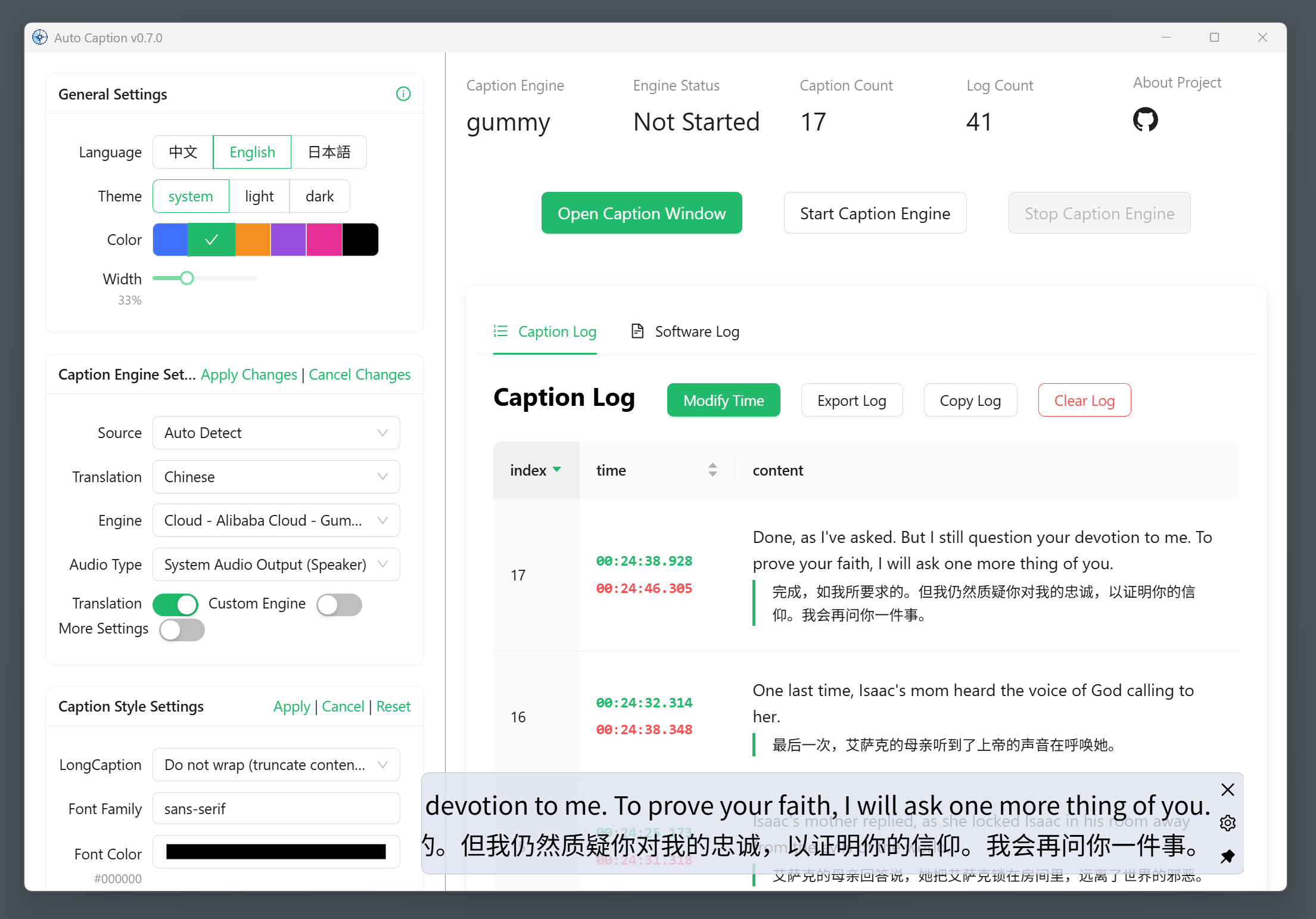

Auto Caption is a cross-platform real-time caption display software.

Version 0.7.0 has been released, improving the software interface and adding a software log display. The local caption engine is under development and is expected to be released in the form of Python code...

📥 Download

📚 Documentation

Project API Documentation (Chinese)

✨ Features

- Generate captions from audio output or microphone input

- Cross-platform (Windows, macOS, Linux) and multi-language interface (Chinese, English, Japanese) support

- Rich caption style settings (font, font size, font weight, font color, background color, etc.)

- Flexible caption engine selection (Alibaba Cloud Gummy cloud model, local Vosk model, self-developed model)

- Multi-language recognition and translation (see below "⚙️ Built-in Subtitle Engines")

- Subtitle record display and export (supports exporting .srt and .json formats)
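As an illustration of the two export formats, here is a minimal sketch that writes a list of caption records as SRT text and as JSON. The record fields (`start`, `end`, `text`) are hypothetical and chosen only for this example; the software's actual record layout may differ.

```python
import json


def format_timestamp(seconds: float) -> str:
    """Format a time offset in seconds as an SRT timestamp (HH:MM:SS,mmm)."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def to_srt(records) -> str:
    """Render records as SRT: index, time range, text, then a blank line."""
    blocks = []
    for index, rec in enumerate(records, start=1):
        blocks.append(
            f"{index}\n"
            f"{format_timestamp(rec['start'])} --> {format_timestamp(rec['end'])}\n"
            f"{rec['text']}\n"
        )
    return "\n".join(blocks)


def to_json(records) -> str:
    """Render records as JSON, keeping non-ASCII caption text readable."""
    return json.dumps(records, ensure_ascii=False, indent=2)
```

Calling `to_srt` on a list of such records yields text that can be saved with a `.srt` extension; `to_json` gives the same records as a JSON array.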

📖 Basic Usage

The software has been adapted for Windows, macOS, and Linux platforms. The tested platform information is as follows:

| OS Version | Architecture | System Audio Input | System Audio Output |
|---|---|---|---|
| Windows 11 24H2 | x64 | ✅ | ✅ |
| macOS Sequoia 15.5 | arm64 | ✅ Additional config required | ✅ |
| Ubuntu 24.04.2 | x64 | ✅ | ✅ |
| Kali Linux 2022.3 | x64 | ✅ | ✅ |
| Kylin Server V10 SP3 | x64 | ✅ | ✅ |

Additional configuration is required to capture system audio output on macOS and Linux platforms. See the Auto Caption User Manual for details.

The international version of Alibaba Cloud services does not provide the Gummy model, so non-Chinese users currently cannot use the Gummy caption engine.

To use the default Gummy caption engine (which uses cloud-based models for speech recognition and translation), first obtain an API key from the Alibaba Cloud Bailian platform. Then add the API key in the software settings, or set it as an environment variable (reading the API key from environment variables is supported only on Windows). Related tutorials:

The recognition performance of Vosk models is suboptimal; use them with caution.

To use the Vosk local caption engine, first download the model you need from the Vosk Models page, extract it locally, and add the path of the model folder to the software settings. Currently, the Vosk caption engine does not support translated captions.

If you find the above caption engines don't meet your needs and you know Python, you may consider developing your own caption engine. For detailed instructions, please refer to the Caption Engine Documentation.

⚙️ Built-in Subtitle Engines

Currently, the software comes with 2 subtitle engines, with new engines under development. Their detailed information is as follows.

Gummy Subtitle Engine (Cloud)

Built on Tongyi Lab's Gummy Speech Translation Model, which is called through the Alibaba Cloud Bailian API.

Model Parameters:

- Supported audio sample rate: 16kHz and above

- Audio sample depth: 16bit

- Supported audio channels: Mono

- Recognizable languages: Chinese, English, Japanese, Korean, German, French, Russian, Italian, Spanish

- Supported translations:
  - Chinese → English, Japanese, Korean
  - English → Chinese, Japanese, Korean
  - Japanese, Korean, German, French, Russian, Italian, Spanish → Chinese or English
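The translation pairs listed above can be captured in a small lookup table. The sketch below is only an illustration of that list; the names `SUPPORTED_TRANSLATIONS` and `can_translate` are hypothetical and do not come from the project's code.

```python
# Source languages that can only be translated into Chinese or English.
_PIVOT_ONLY_SOURCES = (
    "Japanese", "Korean", "German", "French", "Russian", "Italian", "Spanish",
)

# Maps each recognizable source language to its supported target languages.
SUPPORTED_TRANSLATIONS = {
    "Chinese": {"English", "Japanese", "Korean"},
    "English": {"Chinese", "Japanese", "Korean"},
    **{lang: {"Chinese", "English"} for lang in _PIVOT_ONLY_SOURCES},
}


def can_translate(source: str, target: str) -> bool:
    """Return True if the listed pairs include source -> target."""
    return target in SUPPORTED_TRANSLATIONS.get(source, set())
```

For example, `can_translate("Chinese", "Korean")` is true, while `can_translate("German", "Japanese")` is false because German can only be translated into Chinese or English.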

Network Traffic Consumption:

The subtitle engine samples audio at the device's native sample rate (assumed to be 48kHz), with 16bit sample depth and a mono channel, so the upload rate is approximately:

$$48000\ \text{samples/second} \times 2\ \text{bytes/sample} \times 1\ \text{channel} = 96000\ \text{bytes/s} = 93.75\ \text{KB/s} \quad (1\ \text{KB} = 1024\ \text{bytes})$$

The engine only uploads data when receiving audio streams, so the actual upload rate may be lower. The return traffic consumption of model results is small and not considered here.
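The same estimate can be reproduced for other sample rates with a few lines of Python. This is a minimal sketch of the arithmetic only, assuming uncompressed PCM and 1 KB = 1024 bytes; the function name is hypothetical.

```python
def upload_rate_kb_per_s(sample_rate_hz: int, bytes_per_sample: int = 2,
                         channels: int = 1) -> float:
    """Upper bound on the upload rate of raw PCM audio, in KB/s (1 KB = 1024 bytes)."""
    bytes_per_second = sample_rate_hz * bytes_per_sample * channels
    return bytes_per_second / 1024
```

With the defaults (16-bit, mono), 48 kHz gives 93.75 KB/s, matching the figure above, and 16 kHz gives 31.25 KB/s.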

Vosk Subtitle Engine (Local)

Developed based on vosk-api. It currently only generates the original text from audio and does not support translation.
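For readers unfamiliar with vosk-api, the sketch below shows the general shape of a recognition loop. It is not the project's engine code: the `transcribe` helper is hypothetical, and it only relies on the `AcceptWaveform`, `Result`, and `FinalResult` methods of vosk's `KaldiRecognizer`, which return JSON strings containing a `text` field.

```python
import json


def transcribe(recognizer, chunks):
    """Yield finished utterances from an iterable of 16-bit mono PCM chunks.

    `recognizer` is expected to behave like vosk.KaldiRecognizer, e.g.:
        from vosk import Model, KaldiRecognizer
        recognizer = KaldiRecognizer(Model("path/to/model-folder"), 16000)
    """
    for chunk in chunks:
        # AcceptWaveform returns a truthy value once an utterance is complete.
        if recognizer.AcceptWaveform(chunk):
            text = json.loads(recognizer.Result()).get("text", "")
            if text:
                yield text
    # Flush whatever audio is still buffered when the stream ends.
    text = json.loads(recognizer.FinalResult()).get("text", "")
    if text:
        yield text
```

Passing the recognizer in as an argument keeps the loop independent of how the model is loaded, so it can be exercised with a stand-in object when no model is installed.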

Planned New Subtitle Engines

The following are candidate models that will be selected based on model performance and ease of integration.

🚀 Project Setup

Install Dependencies

npm install

Build Subtitle Engine

First enter the engine folder and execute the following commands to create a virtual environment (requires Python 3.10 or higher, with Python 3.12 recommended):

# in ./engine folder

python -m venv .venv

# or

python3 -m venv .venv
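If you are unsure whether your interpreter satisfies the version requirement, a quick check like the following can be run before creating the environment. This is a small sketch; the helper name is hypothetical.

```python
import sys

# Minimum version stated above (Python 3.12 is the recommended version).
MIN_VERSION = (3, 10)


def meets_minimum(version=None) -> bool:
    """Return True if the given (major, minor, ...) version is at least 3.10.

    Defaults to the version of the interpreter running this code.
    """
    current = sys.version_info if version is None else version
    return tuple(current[:2]) >= MIN_VERSION


if __name__ == "__main__":
    print("OK" if meets_minimum() else "Python 3.10 or higher is required")
```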

Then activate the virtual environment:

# Windows

.venv/Scripts/activate

# Linux or macOS

source .venv/bin/activate

Then install dependencies (this step may fail on macOS and Linux, usually because a package fails to build; resolve such failures according to the error messages):

pip install -r requirements.txt

If you encounter errors when installing the samplerate module on Linux systems, you can try installing it separately with this command:

pip install samplerate --only-binary=:all:

Then use pyinstaller to build the project:

pyinstaller ./main.spec

Note that the path to the vosk library in main-vosk.spec might be incorrect and may need to be adjusted to match your environment (it depends on the Python version of the virtual environment).

# Windows

vosk_path = str(Path('./.venv/Lib/site-packages/vosk').resolve())

# Linux or macOS

vosk_path = str(Path('./.venv/lib/python3.x/site-packages/vosk').resolve())
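One way to avoid editing the path by hand is to derive it from the platform and the Python version. The sketch below is an assumption-laden illustration, not the contents of the spec file: the function name is hypothetical, and it assumes the interpreter running it has the same major and minor version as the one inside `.venv`.

```python
import sys
from pathlib import Path


def vosk_package_path(venv_dir="./.venv", platform=sys.platform,
                      version=sys.version_info[:2]) -> str:
    """Build the expected location of the vosk package inside a virtual environment."""
    venv_dir = Path(venv_dir)
    if platform == "win32":
        # Windows venvs keep packages directly under Lib/site-packages.
        site_packages = venv_dir / "Lib" / "site-packages"
    else:
        # Linux and macOS venvs include the Python version in the path.
        major, minor = version
        site_packages = venv_dir / "lib" / f"python{major}.{minor}" / "site-packages"
    return str((site_packages / "vosk").resolve())


vosk_path = vosk_package_path()
```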

After the build completes, you can find the executable file in the engine/dist folder. Then proceed with subsequent operations.

Run Project

npm run dev

Build Project

# For Windows

npm run build:win

# For macOS

npm run build:mac

# For Linux

npm run build:linux
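When scripting the build, the matching command can be chosen from the current platform. The following is a minimal sketch that only maps Python's `sys.platform` values to the three npm scripts listed above; the helper name is hypothetical and not part of the project.

```python
import sys

# npm script for each platform, keyed by the value of sys.platform.
BUILD_SCRIPTS = {
    "win32": "build:win",
    "darwin": "build:mac",
    "linux": "build:linux",
}


def build_command(platform: str = sys.platform) -> list:
    """Return the npm command line that builds the project for the given platform."""
    try:
        script = BUILD_SCRIPTS[platform]
    except KeyError:
        raise ValueError(f"no build script known for platform {platform!r}") from None
    return ["npm", "run", script]
```

The returned list can be passed to `subprocess.run` from the project root.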